At a recent CEO breakfast I hosted for leaders across health and community services, we heard that governments want NGOs to account clearly for how public funds are being used and what impact they are delivering. Boards are asking harder questions. Commissioners are tightening specifications.

That is all reasonable.

It made me think about how we decided to performance-manage a new statewide program I was involved in commissioning years ago.

We had implemented a new service delivery model across 33 locations. Different communities. Different capabilities. Different local pressures. Under the usual approach, each service would have been issued clear performance targets from day one and held tightly to them.

Instead of following the usual path, we made a deliberate choice.

The investment specifications defined the intent, scope and boundaries. They were the guardrails. If someone walked into the service in one town, they could expect a similar range and scope of services as in another.

We decided not to lock in fixed performance targets at the outset.

Instead, every quarter, we produced comprehensive benchmarking reports across all services. Every provider saw everyone else’s data. We came together as a strategic implementation group and looked at the reports with curiosity. What was working? Where were the barriers? What patterns were emerging?

When performance issues surfaced, we brought together the regional contract manager, the peak body and the statewide commissioning team with the service provider. Not to enforce first, but to understand.

Was perceived underperformance within their control? Or was something in the system constraining performance?

Those decisions paid off. In the first measurement cycle, escalation into more intensive statutory responses fell by a statistically significant margin across the state. Upstream intervention was working. Over time, we realised that data governance matters as much as design discipline.

We also learned that because everyone saw everyone else’s data, improvement was driven by insight, not enforcement.

Why this matters for leaders

When we’re under scrutiny, the instinct is to tighten control, be more specific about targets and require more detailed reporting, leaving less room for variation.

In some contexts, fixed targets are appropriate. But if accountability is designed only to ensure firm control, it shrinks adaptive capacity.

In complex service systems, underperformance is rarely a single-organisation issue. It sits at the intersection of local context, workforce capability, system design and commissioning assumptions.

When reporting cycles are designed only to check compliance, you get submission. But if they are designed to surface patterns and trigger joint problem-solving, you get system improvement.

Most innovation doesn’t die in the design phase. It dies in the first performance review meeting.

When you over-specify outputs too early, you risk locking in mediocre performance at scale, narrowing the field of learning just when the system most needs to adapt. The challenge is to design and manage implementation so that both accountability and innovation can operate at the same time.

Research on Complexity Leadership Theory by Uhl-Bien, Marion and McKelvey (2007) shows that high-performing systems rely on the interaction between formal administrative structures and what they describe as “adaptive space”.

As Uhl-Bien says:

Adaptive space isn’t something you declare in a strategy document. It is created or closed down in the way performance is reviewed and acted on. Administrative systems provide stability and oversight. Adaptive spaces allow experimentation and cross-boundary learning. If one dominates the other, performance suffers.

You need both safety and lift

Think of it as guardrails and runway.

Guardrails provide safety within defined boundaries. They protect public value and ensure a minimum level of consistency.

The runway provides space for lift. It allows different approaches to respond to local conditions and emerging insight.

You can design guardrails that allow flexibility. But if implementation focuses only on tightening targets and enforcing uniformity, the runway disappears.

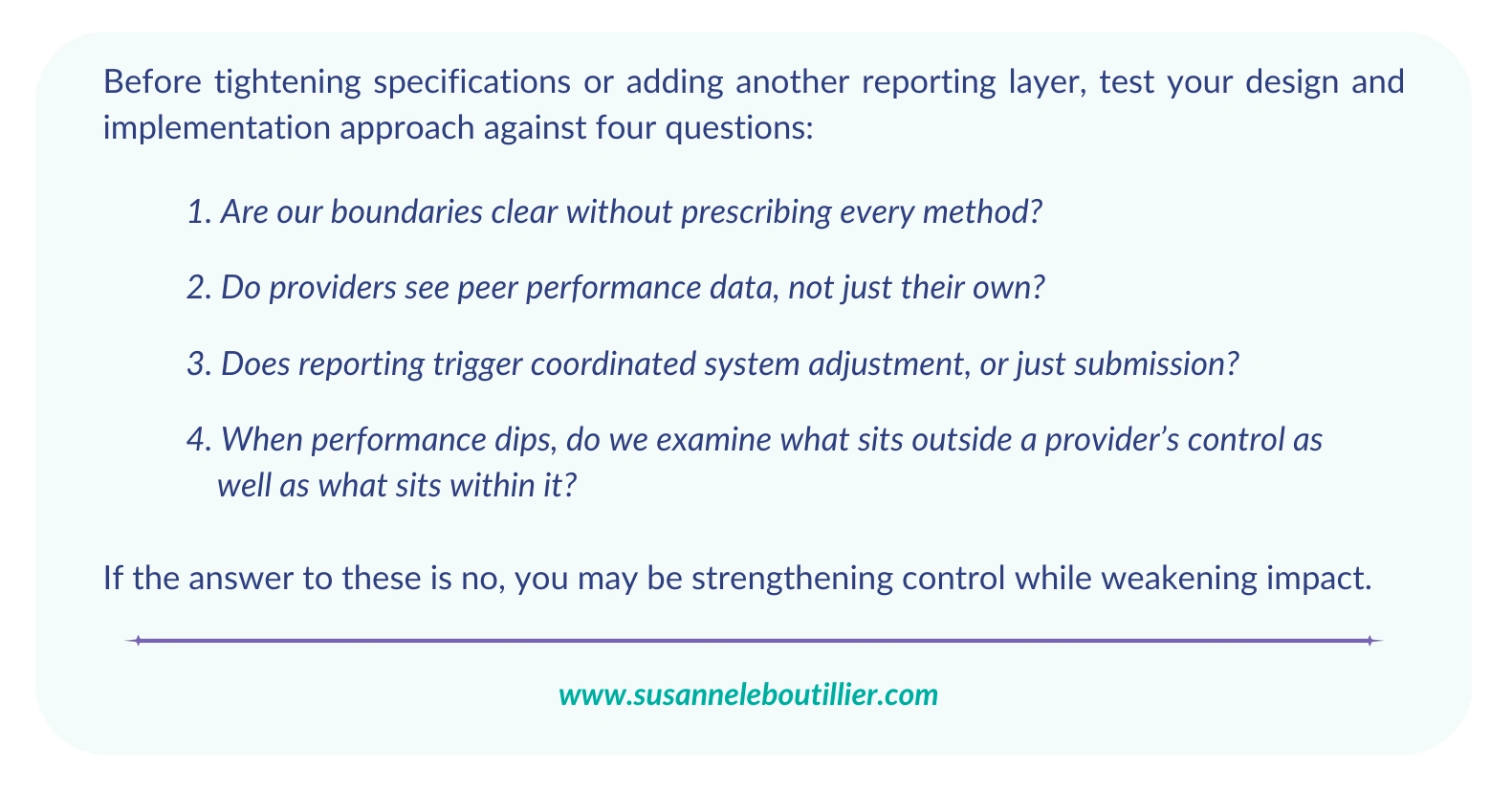

Accountability Design Filter

If you want impact over pure compliance, start here:

- Where have we tightened specifications in response to scrutiny, and what has that done to adaptive capacity?

- What assumptions are we making about certainty that our operating environment does not support?

- Is our accountability model optimised for audit comfort, or for measurable impact over time?