We keep blaming AI for weakening critical thinking at work. I think that’s too convenient.

AI is accelerating a problem leaders have been building for years: organisations full of people trained to deliver simplified answers instead of sound judgement.

I’ve had a phrase stuck in my head.

“You reap what you sow.”

I was reading research on how often Google’s AI Overviews get things wrong.

But the memory it brought back was older.

Early in my career, I worked in the public service with a senior politician who wanted to talk to the community about a cluster of complex, interrelated issues in a way that did not reduce them to slogans.

Then a major scandal broke, followed by a systems review and a commission of inquiry. What had looked like the beginning of a different kind of public conversation turned into a crisis-management conversation, and the plan fell away.

We trained ourselves out of thinking

If you lead in government, healthcare, community services, education, regulation, or any organisation where poor judgement has real consequences, this is not an abstract AI debate. It is a leadership risk.

Somewhere along the way, leaders in complex systems were told their job was to be understood in five seconds. The mantra was: could someone in Year 5 follow this?

It was meant as a check on jargon and became something else.

A generation of politicians, boards, executives, and public servants learned to communicate in ways that did not invite anyone to think, because the message had been simplified so far in advance that it no longer asked them to.

The result is the system we now live in: a broad base of people who have been discouraged from thinking for themselves, because the people leading them stopped expecting them to.

That is the “reap what you sow” part. A good deal of where we are in the world right now, politically, globally, is downstream of that choice.

We get upset when people do not think critically. Yet we have not given them the information, the support, or the practice to do it.

AI is the accelerant

At the request of The New York Times, an AI startup called Oumi ran 4,326 Google searches through SimpleQA, a benchmark designed to test short, fact-seeking answers. They tested Google’s AI Overviews twice: in October, when it ran on Gemini 2, and again in February, after Google upgraded to Gemini 3. Overall accuracy improved from 85% to 91%.

But it was the numbers underneath that caught my eye. In October, 37% of the answers rated correct were not fully supported by the sources Google cited. In February, that figure rose to 56%. The answers were more often right overall, but they were also harder to verify from the citations sitting underneath them. The system was becoming more accurate and, at the same time, harder to check.

Google has publicly disputed the analysis, saying it had serious holes and did not reflect how people actually search. But even on that view, the issue is not only whether the answer is right. It is whether people are doing enough thinking to know when to question it.

I am not above this. I use AI. I’ve caught myself letting it do some of my thinking for me. Every time I notice, I pull back and recommit to using it as a thinking partner. Handing the thinking over would be easier.

If I am catching myself doing it, so are the people in your organisation.

Judgement is built through consequences

Those of us who came up through the ranks before AI had an advantage we did not fully appreciate at the time. We did the grind and fell into all the little hidden traps. We had things go wrong and had to live with the consequences.

That is how judgement gets built. Through mistakes that cost you something, and a mentor close enough to help you see what happened and why.

It is the same reason people who learned to drive before sat-nav can usually find their way home when the phone dies. Following a confident instruction is not the same skill as reading the road.

The next generation coming up through the workforce is inheriting what sounds like progress, and in many ways is, until you look for the place where judgement used to get built and find it has been removed.

If the mundane work is gone, and no one is deliberately creating the conditions for critical thinking, what is left is people who can get fast answers but cannot tell when those answers are wrong.

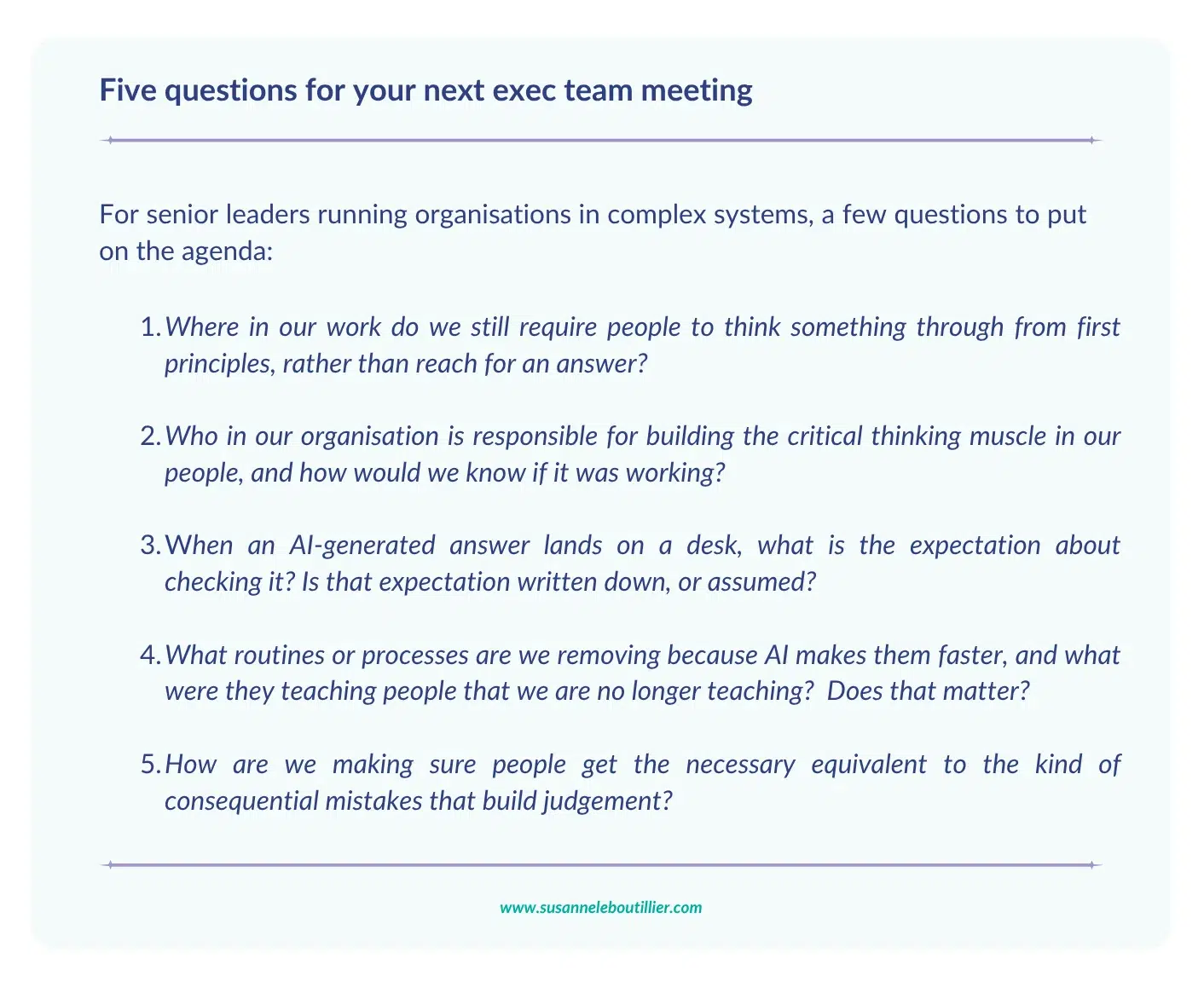

These are not questions with tidy answers. They sit in the ambiguity most of us are used to working in. The organisations that benefit most from AI over the next three years will not be the ones that automate the fastest. They will be the ones that still teach their people how to read the road, not just follow the instruction.

This is the conversation I am increasingly having with boards and leadership teams, and taking into the rooms where I speak.

Because the real risk is not that AI sometimes gets answers wrong. It is that organisations forget how to recognise when it does.